The exponential growth of AI models is hitting a memory wall, and Vsora’s Jotunn8 processor has been designed to solve this fundamental bottleneck.

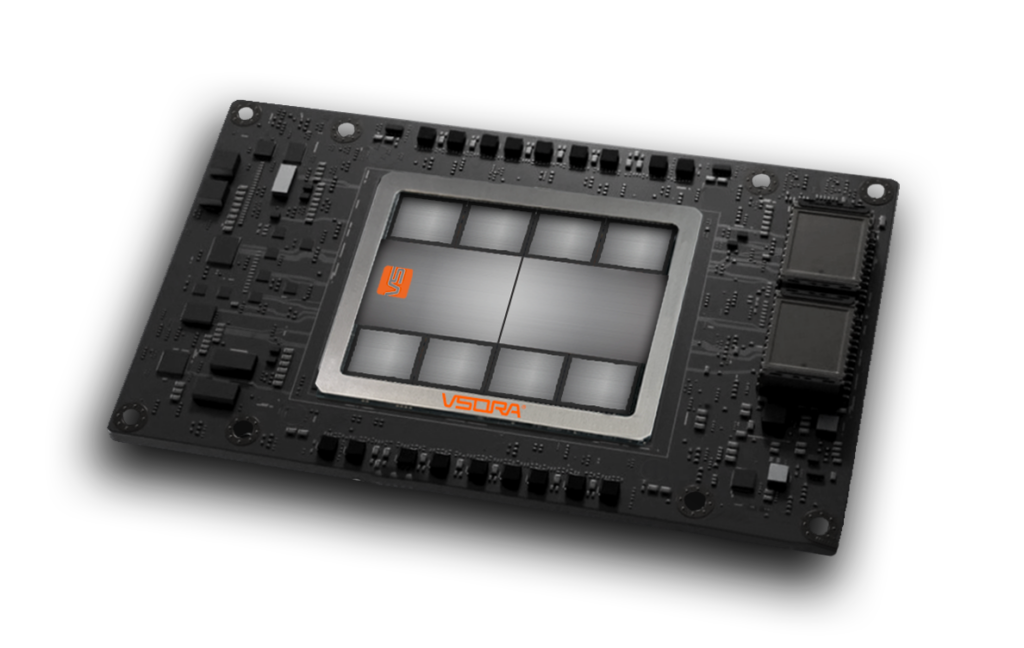

Paris-based fabless semiconductor company Vsora announced it has completed the tapeout of its Jotunn8 AI inference chip, marking the transition from design to fabrication. The chip, manufactured using TSMC’s 5-nm process and CoWoS multi-chip packaging, is aimed at large-scale AI inference workloads in data centers.

Development boards, reference designs, and servers are planned for early 2026, with production expected to start in the first quarter.

Memory wall bottleneck

Vsora’s Jotunn8 AI inference chip is built around a design that tackles the memory wall, a persistent bottleneck limiting computing performance. Traditional AI processors often fall short of their rated throughput because compute units sit idle while waiting for data, wasting cycles and drawing excess power. Vsora claims its architecture rethinks how compute and memory interact.

While competitors such as Cerebras have developed architectures optimized for AI model training, Vsora’s Jotunn8 focuses exclusively on large-scale AI inference workloads. “Cerebras has built an impressive architecture, primarily optimized for AI model training—focusing on massive on-die compute density and very large, localized memory,” Jan Pantzar, Vsora’s VP of sales and marketing, told EE Times Europe. “Jotunn8, by contrast, has been designed from the ground up for large-scale AI inference, where efficiency, scalability, and memory access behavior are fundamentally different challenges.”

A single Jotunn8 delivers about 3,200 teraFLOPS of compute power coupled with 288 GB of HBM3e memory, the company claimed. According to Pantzar, its proprietary architecture overcomes the traditional memory wall by enabling continuous data flow to the compute units, eliminating the latency and bandwidth bottlenecks that typically constrain inference performance. “In a standard eight-chip Jotunn8 server configuration, this scales linearly to more than 25 petaFLOPS of sustained compute power and over 2.3 terabytes of high-bandwidth memory,” Pantzar said. “The result is training-class performance for inference workloads but at a fraction of the power and footprint.”

He added that the company’s inference focus reflects where long-term efficiency gains will be found: “While Cerebras targets the front end of AI model creation, Vsora’s Jotunn8 is engineered for the deployment phase, where models run, scale, and create value every day. We believe that’s where the next revolution in AI compute efficiency will happen, and that’s exactly what Jotunn8 enables.”
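The eight-chip server figures Pantzar quoted follow from the per-chip numbers by simple multiplication. The sketch below reproduces that arithmetic, assuming ideal linear scaling (as claimed) and decimal units (1 petaFLOPS = 1,000 teraFLOPS, 1 TB = 1,000 GB). The function and constant names are illustrative only and do not come from Vsora.

```python
# Per-chip figures as claimed by Vsora in the article.
TFLOPS_PER_CHIP = 3_200
HBM_GB_PER_CHIP = 288


def server_totals(chips: int = 8) -> tuple[float, float]:
    """Return (petaFLOPS, terabytes of HBM), assuming ideal linear scaling."""
    pflops = chips * TFLOPS_PER_CHIP / 1_000
    hbm_tb = chips * HBM_GB_PER_CHIP / 1_000
    return pflops, hbm_tb


pflops, hbm_tb = server_totals()
print(f"{pflops:.1f} PFLOPS, {hbm_tb:.3f} TB of HBM")
# prints "25.6 PFLOPS, 2.304 TB of HBM"
```

Both results match the quoted "more than 25 petaFLOPS" and "over 2.3 terabytes." Whether real workloads sustain that linear scaling is Vsora's claim, not something this arithmetic can confirm.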

Efficiency and cost per token

Energy consumption has become a central metric in AI computing. “Sustainability is certainly an important outcome, but the real differentiator for our customers is efficiency, which directly translates into cost per token,” Pantzar said.

He emphasized that in large-scale inference, performance is defined not just by teraFLOPS but by how many tokens can be processed per second, per watt, and per dollar. “Compared with current-generation inference processors, Jotunn8 delivers more than 3× higher real-world throughput while consuming less than half the power,” he said. “This means customers can achieve the same output with one-sixth of the energy or get more than 6× the output within the same power envelope, both of which dramatically improve operational economics in data centers.”

In practical terms, Pantzar said this results in a massive reduction in cost per token, a metric that has become the industry’s most relevant performance benchmark. “It’s this combination of architectural efficiency and energy proportionality—not just absolute power savings—that defines Jotunn8’s sustainability and business advantage.”
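The "one-sixth of the energy" and "6× the output" figures follow directly from the two ratios Pantzar cited. A minimal sketch of that arithmetic, taking the claimed 3× throughput and half power at face value (the function name is hypothetical, and the boundary values stand in for "more than" and "less than"):

```python
def relative_efficiency(throughput_gain: float, power_ratio: float) -> float:
    """Tokens per joule relative to a baseline processor.

    throughput_gain: tokens/s relative to the baseline (3.0 means 3x).
    power_ratio: power draw relative to the baseline (0.5 means half).
    """
    return throughput_gain / power_ratio


gain = relative_efficiency(throughput_gain=3.0, power_ratio=0.5)
energy_for_same_output = 1 / gain  # fraction of the baseline energy

print(f"{gain:.0f}x tokens per joule")
print(f"{energy_for_same_output:.3f} of baseline energy for the same output")
```

The "6× the output within the same power envelope" reading additionally assumes that throughput grows in proportion to the number of chips deployed under a fixed power budget. The energy component of cost per token falls by the same factor; hardware and hosting costs are not captured here.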

Chiplet architecture

Vsora’s architecture uses a chiplet-based design built on a 2.5D silicon interposer, linking compute chiplets with high-capacity memory chiplets. Each compute chiplet integrates two Vsora compute cores, while each memory chiplet includes an HBM stack. The chiplets are interconnected through an ultra-low-latency, high-throughput network-on-chip fabric. In the Jotunn8, the company said, eight compute chiplets and eight HBM3e memory chiplets are arranged around the central interposer, delivering massive aggregate bandwidth and parallelism in a single package.

“From a manufacturing standpoint, chiplets deliver significant benefits,” Pantzar said. “As wafer yields do not scale linearly with die size, breaking the design into optimized chiplets dramatically improves overall yield and reduces manufacturing cost. In Jotunn8’s case, this translates into a silicon surface area reduction of roughly 30% to 40%, compared with an equivalent monolithic implementation, while maintaining full architectural coherence and bandwidth efficiency across all compute units.”

The result, Pantzar said, is a package that combines the performance of a large-scale processor with the cost efficiency and scalability of modular silicon, “an essential advantage for deploying AI inference economically at data center scale.”
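Pantzar's point that yield does not scale linearly with die size can be illustrated with a first-order Poisson defect model, in which the probability that a die is defect-free is exp(-area × defect density). This is a textbook simplification, not Vsora's methodology. The die area and defect density below are placeholder values rather than Vsora or TSMC data, and the model ignores die-to-die interface overhead, interposer cost, and packaging yield.

```python
import math


def poisson_yield(area_cm2: float, defects_per_cm2: float) -> float:
    """Fraction of dies with zero defects under a Poisson defect model."""
    return math.exp(-area_cm2 * defects_per_cm2)


def silicon_per_good_unit(total_area_cm2: float, n_dies: int,
                          defects_per_cm2: float) -> float:
    """Wafer area consumed per working unit split into n_dies equal dies.

    Assumes each die is tested before assembly, so only defective dies
    are discarded rather than whole packages.
    """
    die_area = total_area_cm2 / n_dies
    return n_dies * die_area / poisson_yield(die_area, defects_per_cm2)


# Placeholder inputs chosen for illustration only.
TOTAL_AREA_CM2 = 6.0      # hypothetical total compute silicon
DEFECTS_PER_CM2 = 0.1     # hypothetical defect density

monolithic = silicon_per_good_unit(TOTAL_AREA_CM2, 1, DEFECTS_PER_CM2)
chiplets = silicon_per_good_unit(TOTAL_AREA_CM2, 8, DEFECTS_PER_CM2)
print(f"silicon saved per good unit: {1 - chiplets / monolithic:.0%}")
```

The size of the saving depends entirely on the assumed area and defect density. The sketch shows only the direction of the effect: smaller dies waste less silicon per defect.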

Data center AI inference

In its latest report, titled “Data Center Semiconductor Trends 2025,” Yole Group wrote, “In 2024, the total semiconductor total addressable market for data centers reached $209 billion, spanning compute, memory, networking, and power. By 2030, that figure is projected to grow to nearly $500 billion.” AI and HPC have become the primary use cases, with generative AI alone driving significant shifts in demand for processors and accelerators.

“Yole’s analysis reflects very well what we are seeing in the market,” Pantzar said. “The acceleration of AI adoption across data centers, and particularly the shift from training to large-scale inference, is transforming the semiconductor landscape. We agree that the total addressable market for data center silicon is expanding rapidly, and that inference will soon represent the dominant share of deployed compute capacity.”

Pantzar added that, from Vsora’s perspective, “this trend fully validates our roadmap,” noting that the company’s focus “has always been on solving the critical bottlenecks that limit AI inference at scale—namely, performance, efficiency, and scalability.

“The broader market projections, including those from Yole, reinforce our conviction that inference is not just the next phase of AI compute but the foundation for its industrialization,” he added. “Our objective is to ensure that Vsora’s technology enables that transformation efficiently and at scale.”

Jotunn8 was designed from the start to scale across the full range of AI infrastructure, from hyperscale data centers to specialized enterprise environments. “We believe that the greatest early adoption will come from cloud and data center operators running large-scale inference workloads, particularly those supporting generative AI, search, and recommendation engines, where energy efficiency and cost per token are critical drivers,” Pantzar said.

When asked whether Jotunn8 meets the stringent demands of autonomous driving, he explained that while the architecture demonstrates the performance characteristics required for real-time inference, it was not designed to meet the specific cost, memory, and environmental constraints of automotive systems.

“For autonomous driving and edge AI, those requirements are addressed by our Tyr family of processors, based on the same chiplets as Jotunn8, with less memory and a slightly different design, ensuring they meet ISO 26262 and ASIL safety standards, low-latency real-time performance, and automotive-grade reliability,” Pantzar said. “In short, Jotunn8 powers large-scale cloud inference, while Tyr brings that same intelligence safely to the edge, from vehicles to embedded systems. Together, they form a unified compute roadmap from data center to road.”

From tapeout to market readiness

Pantzar confirmed that the Jotunn8 roadmap is progressing according to plan: “Vsora is very much on schedule. The tapeout of Jotunn8 confirms that our design-to-silicon execution is fully on plan, with the start of production set for the first quarter of 2026.”

The €40 million funding round, completed in April, has enabled the company to move through the tapeout and early manufacturing phases. “It covers the start of chip manufacturing, as well as the development of the OCP Accelerator Module boards, Universal Baseboard boards, and server configurations that will support early deployment,” Pantzar said.

He added that the company is already working with customers: “Our customers, who are already engaged with us, will be able to evaluate and integrate Jotunn8 in real-world AI inference environments as soon as hardware becomes available.”

Vsora is already preparing for its next stage of growth and expects to secure further investment. “As we move toward volume ramp-up and next-generation development in the second half of 2026, we anticipate securing additional strategic funding to support both scaling and innovation,” Pantzar said.

Sovereign AI

Vsora’s announcement comes amid broader European efforts to strengthen domestic capabilities in AI and high-performance computing. France-based SiPearl, for instance, recently announced the tapeout of Rhea1, its first-generation processor designed to power Jupiter, Europe’s first exascale supercomputer.

SiPearl’s Rhea1 and Vsora’s Jotunn8 indicate that Europe is not just consuming AI hardware but building it, underscoring the value of European design and manufacturing. Pantzar said Vsora sees AI and advanced computing as truly global endeavors, built on collaboration, innovation, and shared progress. Yet “the concept of technological sovereignty is very real, and Europe’s ability to design and produce high-performance compute solutions is strategically important, not only for Europe but for the resilience of the global AI ecosystem as a whole.”

Pantzar added that Europe’s engineering base remains a valuable asset: “Europe has tremendous talent, research capability, and industrial know-how, and regaining a strong position in AI hardware ensures that these assets contribute meaningfully to the next wave of global innovation.”

Vsora’s ambition, he concluded, is to combine European engineering strength with a global reach. “We work with partners and customers across the world, but our roots in Europe give us a unique role, proving that European technology can compete at the very highest level in AI computing. That combination of global collaboration and regional capability is, in our view, the best path forward for a sustainable and competitive AI future.”