Source: Embedded Computing Design

Design in any discipline – electronics, mechanical, aerospace, etc. – begins with a specification that captures what the end product should do and essentially drives the entire development cycle. In the early stages of development, the first task uses the specification to verify that the design under development works correctly and is error-free. Then, when all the parts of a design are assembled into a full system, the second task uses the specification to determine whether the system performs in the way it was intended to.

The two tasks are called design verification (task 1) and design validation (task 2). Sometimes, erroneously, the two terms are used interchangeably. While similar, the two tasks have very different objectives.

- Verification: Are we building the system right?

- Validation: Are we building the right system?

In the system-on-chip (SoC) design process, a software-based, hardware description language (HDL) simulation approach is used for design verification. Conversely, design validation is carried out on a prototype of the whole system tested in the context of real-world use.

Unfortunately, despite its advantages of ease of use, flexibility and fast design iteration, HDL simulation has not kept pace with device complexity in execution speed. Many current designs, such as internet routers with 1,024 ports or high-definition video processors, require massive verification sequences that would take many years to simulate even on the fastest PC. These sequences stem from the need to run long, contiguous serial protocol streams or to process complex embedded software to fully verify the SoC or system design.

In addition, starting software validation ahead of silicon availability has become important in recent years. A new approach called virtual prototyping was introduced with this goal. While some of these tools have succeeded in jump-starting software development, they only address application programs that don’t require an accurate representation of the underlying hardware. They fall short when testing the interaction of embedded software, such as firmware, device drivers, operating systems and diagnostics, with the hardware. For this testing, embedded software developers rely on an accurate model of the hardware to validate their code.

In contrast, hardware designers need a fairly complete set of software to fully test their SoCs during system validation. The long-established approach to system prototyping on FPGA-based boards provides an accurate representation of the design but is not well suited to hardware debugging. As a result, FPGA prototypes appeal more to software development teams, as long as their designs fit into a few FPGAs.

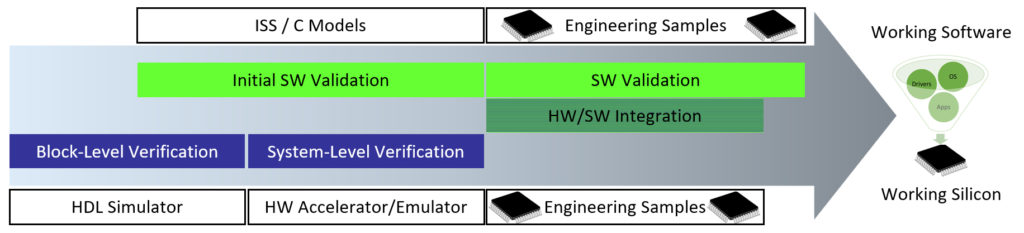

Eventually, both software and hardware groups need to come together on a common model to verify the complete hardware and the embedded software. For most teams using a traditional development cycle, the first complete model is actual silicon (Figure 1).

[Figure 1 | In a traditional development cycle, the first complete model is silicon.]

The problem with waiting for actual silicon is that it is too late in the design cycle. Because the embedded software can’t be fully validated in the context of a complete, accurate system model until silicon, there is an increased probability that problems will be found in silicon. They could be found in the software or in both the software and the hardware, often forcing additional silicon respins and code revisions. Both respins and code revisions have cost and time-to-market implications. What’s needed to avoid these implications is an approach that delivers a unified solution to enable hardware/software verification and validation well ahead of first silicon.

The latest generation of hardware emulators achieves just that. They offer virtually unlimited capacity, up to several billion gates, and verify the design under test (DUT) at a speed of one or more megahertz, while providing considerably better hardware debugging than FPGA prototyping systems. They are easy to use, compile the DUT faster and allow remote 24/7 access from anywhere in the world. New software applications running on the emulator make it possible to support several types of verification, from low-power analysis and verification to design for test (DFT) logic verification. Emulators also bring unique technology to a wide variety of market segments, from networking to processor/graphics, storage and so on.
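To put the speed difference in perspective, the back-of-the-envelope calculation below estimates how long a fixed workload takes at a given execution rate. It is only a sketch: the 10-billion-cycle workload and the 10 Hz simulation rate are illustrative assumptions, not measured figures, and only the 1 MHz emulation rate comes from the range quoted above.

```python
SECONDS_PER_HOUR = 3600
SECONDS_PER_YEAR = 365 * 24 * SECONDS_PER_HOUR


def wall_clock_seconds(design_cycles, cycles_per_second):
    """Return the wall-clock time needed to execute a number of design clock cycles."""
    if cycles_per_second <= 0:
        raise ValueError("cycles_per_second must be positive")
    return design_cycles / cycles_per_second


# Hypothetical workload: an embedded software run of 10 billion design cycles.
WORKLOAD_CYCLES = 10_000_000_000

# Assumed HDL simulation rate for a very large design (illustrative only).
SIMULATION_HZ = 10

# Emulation rate at the low end of the "one or more megahertz" range.
EMULATION_HZ = 1_000_000

simulation_seconds = wall_clock_seconds(WORKLOAD_CYCLES, SIMULATION_HZ)
emulation_seconds = wall_clock_seconds(WORKLOAD_CYCLES, EMULATION_HZ)

simulation_years = simulation_seconds / SECONDS_PER_YEAR
emulation_hours = emulation_seconds / SECONDS_PER_HOUR
speedup = simulation_seconds / emulation_seconds

if __name__ == "__main__":
    print(f"Simulation: about {simulation_years:.1f} years")
    print(f"Emulation:  about {emulation_hours:.1f} hours")
    print(f"Speedup:    {speedup:,.0f}x")
```

Under these assumptions, the same workload drops from decades in simulation to a few hours on an emulator. Changing the assumed rates changes the absolute numbers, but the ratio between the two execution speeds is what decides whether long embedded software runs are practical before silicon.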

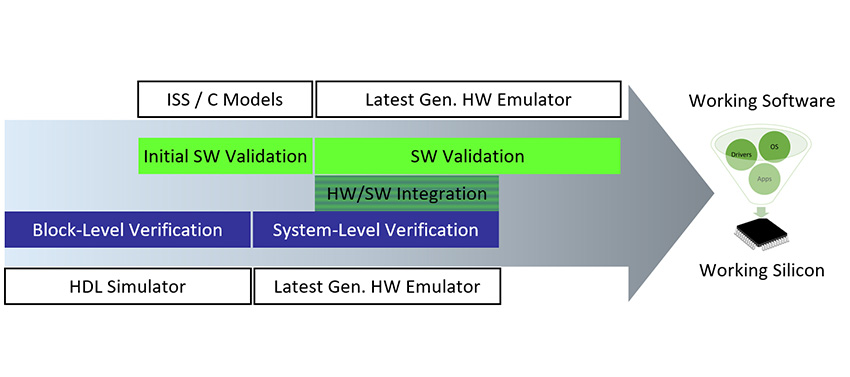

Early in the design cycle, emulators are used for co-emulation with simulators and SystemVerilog to verify intellectual property (IP) blocks and subsystems prior to assembling a complete SoC design. Later in the design cycle, emulators are used to validate the entire system and perform embedded software validation.

They provide full hardware and software debugging capabilities to both hardware and software engineers on the same design representation. This lets hardware and software development groups collaborate and fix integration issues in a way that was not possible before (Figure 2).

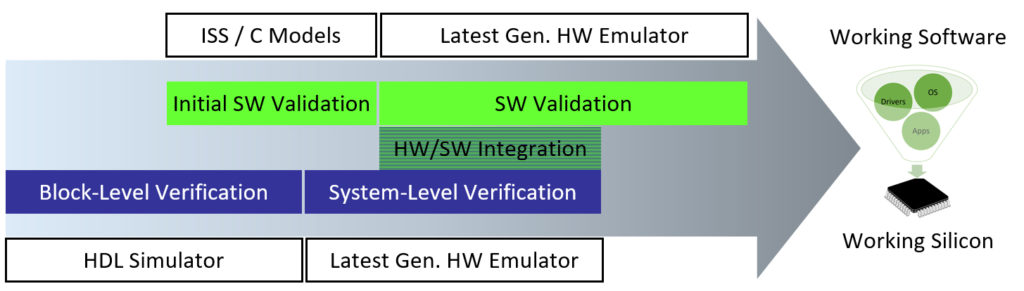

[Figure 2 | The latest generation of hardware emulators significantly accelerates the development cycle.]

Hardware emulation, formerly limited to the verification of very large designs, is today the foundation of all design verification and validation flows. This newfound popularity is the result of growing silicon complexity and the widespread use of embedded software. In design centers, hardware emulation is already used, and will be used even more in the future, across the entire development cycle, from hardware verification and hardware/software integration to embedded software and system validation.

Dr. Lauro Rizzatti is a verification consultant and industry expert on hardware emulation. Previously, Dr. Rizzatti held positions in management, product marketing, technical marketing and engineering.